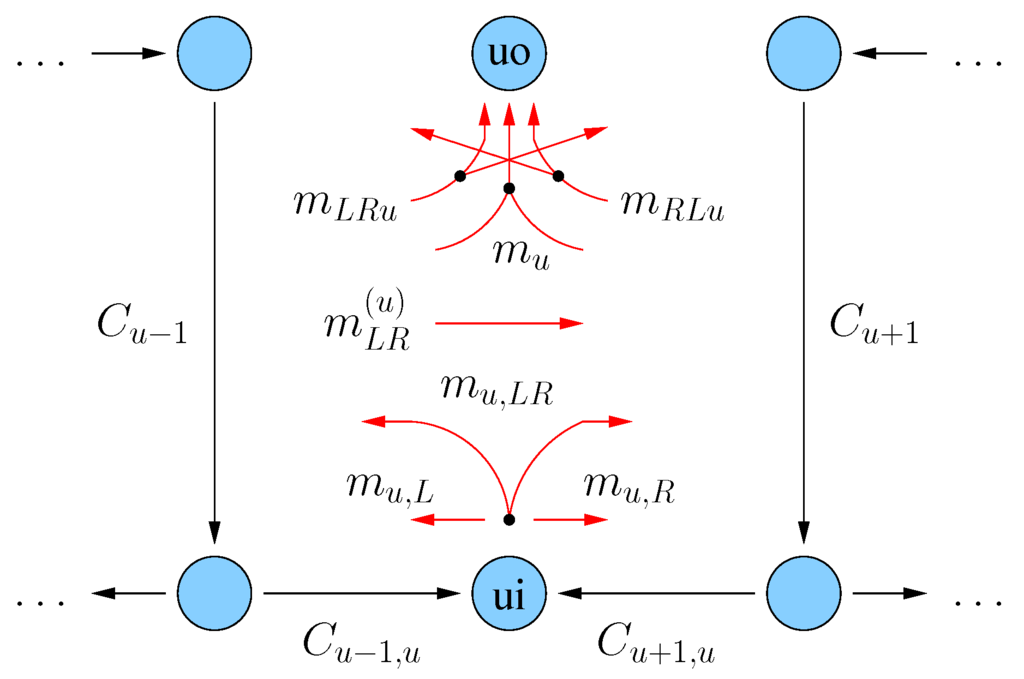

Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. In physics, the word entropy has important physical implications as the amount of 'disorder' of a system. In mathematics, a more abstract definition is used: the (Shannon) entropy of a variable $X$ is defined as

$$H(X) = -\sum_x P(x) \log_2 P(x)$$

bits, where $P(x)$ is the probability that $X$ is in the state $x$, and $P \log_2 P$ is defined as 0 if $P = 0$.

We would like to develop a usable measure of the information we get from observing the occurrence of an event having probability $p$. It's reasonable to ask that this measure be continuous in the probability. And if event $A$ has a certain amount of surprise, and event $B$ has a certain amount of surprise, and you observe them together, and they're independent, it's reasonable that the amount of surprise adds. From here it follows that the surprise you feel at event $A$ happening must be a positive constant multiple of $-\log \mathbb{P}(A)$.

To check that this is the correct function for the entropy, we consider an encoding $E: A^r \rightarrow B^s$ that encodes blocks of $r$ letters in $A$ as $s$ characters in $B$:

$$L(m_A) = r, \quad L(m_B) = s, \quad m_B = E(m_A)$$

Write $n = |A|$ and $m = |B|$. The size of the domain is $n^r$ and the size of the range is $m^s$. The range of $E$ must be at least as large as the domain; otherwise two different messages in the domain would have to map to the same encoding in the range. We therefore choose $s$ to satisfy the following inequalities:

$$m^s \geq n^r \quad\Longleftrightarrow\quad s \log m \geq r \log n \quad\Longleftrightarrow\quad \frac{s}{r} \geq \log_m(n)$$

In this case the entropy depends only on the sizes of $A$ and $B$: at least $\log_m(n)$ characters of $B$ are needed per letter of $A$. This agrees with the surprise measure for $n$ equally likely letters, since $-\log_m(1/n) = \log_m(n)$.

This chapter was devoted to the basic concepts of information theory and coding theory. In Section 6.1, we provided the definitions of entropy, joint entropy, conditional entropy, relative entropy, mutual information, and channel capacity, followed by the information capacity theorem. We also briefly described source coding and data compaction.
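As a quick numerical check of the counting argument, here is a minimal Python sketch. The helper `min_block_length` and the 26-letter/binary example are illustrative assumptions, not part of the original derivation. It finds the smallest $s$ with $m^s \geq n^r$ using exact integer comparison, so the ratio $s/r$ should approach $\log_m(n)$ from above as the block length $r$ grows.

```python
import math


def min_block_length(n: int, m: int, r: int) -> int:
    """Smallest s with m**s >= n**r.

    That is, enough length-s strings over B (|B| = m) to give every
    length-r block over A (|A| = n) its own distinct encoding.
    """
    if m < 2:
        raise ValueError("the target alphabet B needs at least 2 characters")
    domain_size = n ** r  # number of distinct blocks in A^r
    s = 0
    # Exact integer comparison avoids floating-point rounding in log_m(n).
    while m ** s < domain_size:
        s += 1
    return s


# Illustrative example: a 26-letter alphabet encoded in binary.
n, m = 26, 2
for r in (1, 10, 100):
    s = min_block_length(n, m, r)
    print(f"r={r:3d}  s={s:3d}  s/r={s / r:.3f}")
print(f"log_m(n) = {math.log(n, m):.3f}")
```

With these inputs the ratios come out to 5.0, 4.8 and 4.71, creeping down toward $\log_2 26 \approx 4.70$: longer blocks waste less of the rounding up in $s$.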

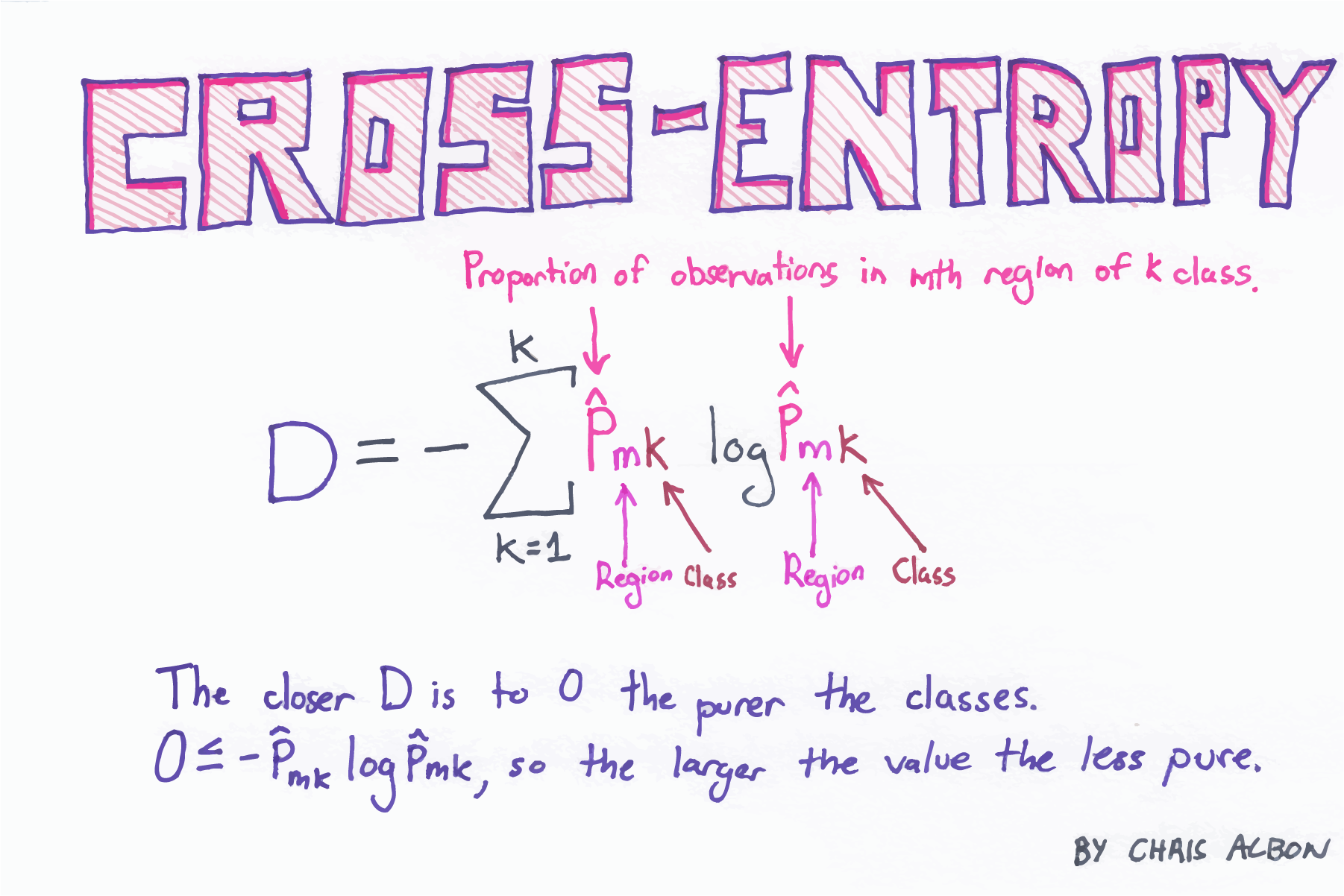

How surprising is an event? Informally, the lower the probability you would have assigned to an event, the more surprising it is, so surprise seems to be some kind of decreasing function of probability.

To understand the meaning of $-\sum_i p_i \log(p_i)$, first define an information function $I$ in terms of an event $i$ with probability $p_i$. The amount of information acquired due to the observation of event $i$ follows from Shannon's solution of the fundamental properties of information: $I(p)$ is monotonically decreasing in $p$, so an increase in the probability of an event decreases the information from an observed event, and vice versa; $I(1) = 0$, because events that always occur do not communicate information; and $I(p_1 p_2) = I(p_1) + I(p_2)$, because the information learned from independent events is the sum of the information learned from each event.
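These three properties can be checked numerically for the standard choice $I(p) = -\log_2 p$, measured in bits. This is a minimal sketch; the function name `information` and the sample probabilities are illustrative assumptions.

```python
import math


def information(p: float, base: float = 2.0) -> float:
    """Self-information I(p) = -log_b(p) of an event with probability p.

    Written as log_b(1/p) so that a certain event gives 0.0 rather than -0.0.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must lie in (0, 1]")
    return math.log(1.0 / p, base)


# I(1) = 0: a certain event carries no information.
print(information(1.0))
# Monotonically decreasing: rarer events are more informative.
print(information(0.9) < information(0.1))
# Additivity for independent events: I(p1 * p2) = I(p1) + I(p2).
p1, p2 = 0.5, 0.25
print(math.isclose(information(p1 * p2), information(p1) + information(p2)))
```

Choosing a different `base` only rescales $I$ by a positive constant, which is consistent with the result that surprise is determined up to a constant multiple of $-\log p$.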

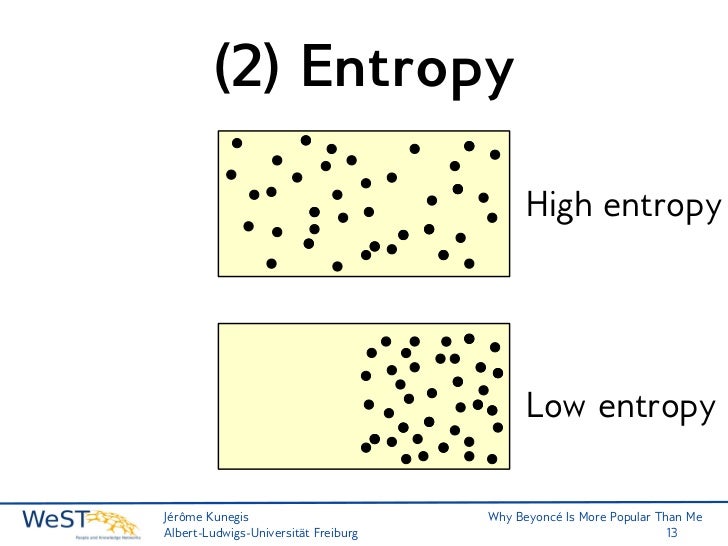

Generally, information entropy is the average amount of information conveyed by an event, when considering all possible outcomes. To calculate the entropy contribution of a specific event $X$ with probability $P(X)$, you calculate $-P(X)\log_2 P(X)$. To calculate information entropy, you need to calculate this term for each possible event or symbol and then sum them all up. As an example, let's calculate the entropy of a fair coin:

$$H = -\tfrac{1}{2}\log_2\tfrac{1}{2} - \tfrac{1}{2}\log_2\tfrac{1}{2} = 1 \text{ bit}$$

The extreme case is that of a double-headed coin that never comes up tails, or a double-tailed coin that never results in a head. The entropy is zero: each toss of the coin delivers no new information, as the outcome of each coin toss is always certain.

Entropy can be normalized by dividing it by information length. This ratio is called metric entropy and is a measure of the randomness of the information.
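The recipe above (compute $-p \log_2 p$ for each outcome, then sum) can be sketched in Python as follows. The function name `entropy` is an illustrative assumption, and the sketch assumes the input probabilities already sum to 1.

```python
import math
from typing import Iterable


def entropy(probs: Iterable[float], base: float = 2.0) -> float:
    """Shannon entropy H = sum of -p * log_b(p) over all outcomes.

    Outcomes with p == 0 are skipped, matching the convention 0 * log 0 = 0.
    Assumes the probabilities already sum to 1.
    """
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)


print(entropy([0.5, 0.5]))  # fair coin: 1.0 bit
print(entropy([1.0, 0.0]))  # double-headed coin: 0.0 bits
print(entropy([0.9, 0.1]))  # biased coin: between 0 and 1 bit
```

The biased coin lands strictly between the two extremes: its outcome is usually predictable, so each toss carries less than one bit on average.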

Uniform probability yields maximum uncertainty and therefore maximum entropy. Entropy, then, can only decrease from the value associated with uniform probability. In expectation notation,

$$H(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)]$$

Shannon defined the quantity of information produced by a source (for example, the quantity in a message) by a formula similar to the equation that defines thermodynamic entropy in physics.

The same quantity drives decision tree exploration. The information gain of a split is the reduction in entropy it achieves: the entropy before the split minus the weighted average of the entropies after it. The more entropy is removed, the greater the information gain, and the higher the information gain, the better the split.
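The split criterion described above can be sketched in a few lines of Python. This is a minimal illustration, not a full decision-tree learner: the helper names `label_entropy` and `information_gain` and the yes/no toy labels are assumptions. Information gain is computed as the parent's entropy minus the size-weighted average entropy of the child subsets.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def label_entropy(labels: Sequence[Hashable]) -> float:
    """Entropy (bits) of the empirical label distribution; 0.0 for no labels."""
    total = len(labels)
    counts = Counter(labels)
    # Each term is p * log2(1/p) with p = c / total.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def information_gain(parent: Sequence[Hashable],
                     children: Sequence[Sequence[Hashable]]) -> float:
    """Parent entropy minus the size-weighted average entropy of the children."""
    total = len(parent)
    weighted = sum(len(child) / total * label_entropy(child) for child in children)
    return label_entropy(parent) - weighted


parent = ["yes"] * 4 + ["no"] * 4
perfect_split = [["yes"] * 4, ["no"] * 4]
useless_split = [["yes", "yes", "no", "no"], ["yes", "yes", "no", "no"]]
print(information_gain(parent, perfect_split))  # 1.0
print(information_gain(parent, useless_split))  # 0.0
```

A split that separates the classes perfectly recovers the parent's full 1 bit of entropy, while a split whose children keep the parent's 50/50 mix gains nothing.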
